Explore and celebrate the human history of our oceans and rivers.

Stay up to date with our latest news, events and offers.

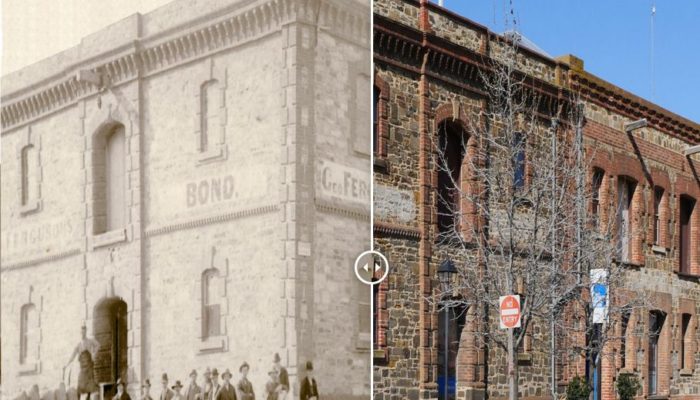

An interactive way to engage with the history of our city: the people, the communities, the stories and the places.

The South Australian Maritime Museum’s walking tour app explores the historic precinct of Port Adelaide.